- Frontpage

- Image

- Authors

- Table of contents

- List of Abbreviations

- 1. Preface

- 2. Partners and collaborators

- 3. Workshop summary and outcome

- 3.1 Background of the workshop

- 3.2 Objectives of the workshop

- 3.3 Mapping of current knowledge for NAMs

- 3.3.1. Survey

- 3.3.2. Workshop poll

- 3.4. Summary of workshop

- 3.5. Conclusions

- 3.6. Specific recommendations from the workshop

- 3.7. Ongoing and follow-up NAMs activities

- 3.8. Workshop evaluation

- 4. Biographies of the speakers

- 5. Summary of presentations

- 5.1. Introduction to New Approaches Methodologies (NAMs)

- 5.2. Introduction to omics

- 5.3. Towards regulatory applications of molecular mechanistic data

- 5.4. An introduction to EPA CompTox Dashboard and GenRA Read-Across module

- 5.5. Challenges of NAMs in the real world 1

- 5.6. Challenges of NAMs in the real world 2

- 5.7. Plenary discussion training needs

- 5.8. Experience of ECHA in applying NAMs in a regulatory context

- 5.9. Omics-based grouping/read-across (G/RAx): A case study with azo dyes

- 5.10. Experience ECHA in applying NAMs in a regulatory context

- 5.11. Variability in repeat dose toxicity studies

- 5.12. Plenary discussion

- 5.13. Grouping and read-across case studies from EU-ToxRisk

- 5.14. ECHA’s experiences with NAMs in the assessment of chemical groups

- 5.15. EU-ToxRisk Case study 11

- 5.16. Orientation on relevant projects

- 5.17. H2020 PrecisionTox omics-based G/RAx case study in the making

- 6. Acknowledgements

- 7. Conflict of interest

- Appendix 1: Programme

- Appendix 2: Supplementary figures

- About this publication


Nordic Workshop on New Approach Methodologies (NAMs) for Grouping and Read-Across under REACH and CLP

November 9th – 11th 2021

Dr Marcin W. Wojewodzic and Dr Monica Andreassen

Table of contents

This publication is also available online in a web-accessible version at https://pub.norden.org/temanord2022-526.

List of Abbreviations

ADME - absorption, distribution, metabolism, and excretion

AOP - Adverse Outcome Pathway

ASPIS cluster - “Animal-free Safety assessment of chemicals: Project cluster for Implementation of novel Strategies”. ONTOX, PrecisionTox and RISKHUNT3R are part of the ASPIS cluster

CLP - Classification, Labelling and Packaging

CSS - The Chemical Strategy for Sustainability

DNEL - Derived No-Effect Level

EC - European Commission

ECHA - European Chemicals Agency

G/RAx - Grouping/Read-Across

GMT - Group Management Team

IATA - Integrated Approaches to Testing and Assessment

KE - Key Event

LOAEL - Lowest-Observed-Adverse-Effect Level

MAD - Mutual Acceptance of Data

MIE - Molecular Initiating Event

MoA - Mode of Action

NAM - New Approach Methodology

NGRA - Next Generation Risk Assessment

NOAEL - No-Observed-Adverse-Effect Level

OECD - The Organisation for Economic Co-operation and Development

Omics - Transcriptomics, metabolomics, proteomics, epigenomics etc.

ONTOX - NAM project funded by the H2020 programme. Coordinator is Prof Mathieu Vinken

PBPK - Physiologically Based Pharmacokinetic modelling and simulation

POD - Point of Departure

PrecisionTox - NAM project funded by the H2020 programme. Coordinator is Prof John K. Colbourne

QSAR - Quantitative Structure-Activity Relationship

qIVIVE - Quantitative In Vitro to In Vivo Extrapolation

REACH - Registration, Evaluation, Authorisation and Restriction of Chemicals

RISKHUNT3R - NAM project funded by the H2020 programme. Coordinator is Prof Bob van de Water

1. Preface

New Approach Methodologies (NAMs) are a rapidly growing field of toxicology, offering a step away from the intensive use of animals in determining the hazard and toxicity of chemicals, and performing risk assessment for human health in the 21st century. Several major legal instruments have recently been put in place to reduce the use of animals in testing (including data sharing, grouping of chemicals, joint submission, and other adaptation possibilities of REACH, such as Annex XI). These initiatives have allowed NAMs to gain momentum, further helped by a growing interest in the implementation of Next Generation Risk Assessment (NGRA) principles.

There is a growing trend towards regulations and activities that will help ensure the smooth passage of NAMs into common practice. Regulatory agencies in North America, Europe, and Australia have launched government initiatives aimed at accelerating the pace of chemical risk assessment by using NAMs.

However, the transition towards the use of these methods by regulatory authorities requires the transfer of knowledge and competence within those authorities. NAMs will be an important item on the EU agenda for many years to come, and the Nordic countries therefore need to be prepared for this shift. This transfer of knowledge should happen in parallel with the development of innovative and robust NAMs.

The Nordic workshop on NAMs was organised by the Norwegian Environment Agency, the Nordic Risk Assessment Project (NORAP) and the Nordic Classification Group (NKGI), and took place as a webinar from 9 to 11 November 2021. The project was funded by the Nordic Council of Ministers and supported by the Nordic Working Group for Chemicals, Environment, and Health.

The target group of the workshop was hazard and risk assessors in the Nordic countries working with REACH and CLP. The aim of the workshop was to narrow the participants’ knowledge gap, to increase their understanding of new methods utilising molecular mechanistic data (especially omics approaches), and to support the use of these methods in grouping and read-across. It also aimed to identify the needs in the Nordic countries for further capacity building and guidance on NAM approaches, especially in a regulatory context.

Finally, the workshop aimed to highlight the current main regulatory challenges for NAMs, to exchange opinions and discuss possible ways forward for using NAMs, and to increase the level of knowledge and competence within the relevant authorities in the Nordic countries.

2. Partners and collaborators

The steering group of the workshop consisted of Dr Hubert Dirven, Dr Birgitte Lindeman (both Norwegian Institute of Public Health, Norway), Dr Tomasz Sobański (European Chemicals Agency, Finland), Prof Mark R. Viant, Prof John K. Colbourne (both Michabo Health Science Ltd and the University of Birmingham, UK), Dr Daniel Borg (Swedish Chemicals Agency, Sweden and Nordic Risk Assessment Project), Tor Øystein Fotland (Norwegian Environment Agency, Norway and Nordic Risk Assessment Project) and Ann Kristin Larsen (Norwegian Environment Agency, Norway and Nordic Classification Group). The project manager and executive chair of the meeting was Marianne van der Hagen (Norwegian Environment Agency, Norway). The workshop was funded by the Nordic Council of Ministers.

Dr Marcin W. Wojewodzic and Dr Monica Andreassen (both Norwegian Institute of Public Health, Norway) were rapporteurs.

3. Workshop summary and outcome

3.1 Background of the workshop

The field of toxicology and toxicity testing of chemicals is rapidly advancing with the introduction of novel in chemico, in vitro, and in silico methods that have the potential to replace or complement the current animal experiment-based methods. Alongside the growth of New Approach Methodologies (NAMs) there is a growing demand for chemical hazard and risk assessors who have expertise in utilising the data generated from these methods. The participants represented various regulatory bodies of Finland, Norway, Denmark, and Sweden. Many of the participants perform risk assessments for their national authorities.

3.2 Objectives of the workshop

The workshop’s main aim was to increase Nordic risk assessors’ understanding of how to use data produced by NAMs for assessing chemical hazards. It also aimed to improve the assessors’ ability to use NAM data in drafting proposals for regulatory actions under the chemical regulations REACH and CLP.

The European Chemicals Agency (ECHA) defines NAMs as methods that bring greater robustness, throughput and/or mechanistic knowledge into risk assessment, enabling more relevant decision making for human health and the environment. Aligned to this definition, the focus in the workshop was on new methods utilising molecular mechanistic data to support grouping and the read-across of chemicals.

This workshop also served to facilitate a discussion about the identified obstacles in the implementation of NAMs in regulatory processes and to identify strategies for increasing their use in read-across and grouping processes. The Norwegian Institute of Public Health (NIPH) drafted this report after the workshop, detailing the recommendations and needs for further actions by ECHA.

3.3 Mapping of current knowledge for NAMs

3.3.1. Survey

To identify the opportunities and challenges of NAMs in the real world, the participants were invited to complete an online survey when registering for the workshop (5.5). The survey revealed that 64% of the respondents had some familiarity with the use of NAMs in a regulatory context. Respondents were familiar with grouping and read-across in regulatory decision making, and QSAR was the NAM they used most often. Respondents identified both opportunities and challenges in including NAMs in grouping and read-across. Among the opportunities, participants saw that NAM data could replace regulatory toxicity observations of apical endpoints with data on chemical modes of action (MoAs), help improve hazard assessment, reduce animal testing, save money, and generate useful data when building a weight-of-evidence model. On the other hand, missing data and a lack of regulatory acceptance and validation of methods were reported as challenges to using NAMs for grouping and read-across.

3.3.2. Workshop poll

To assess the participants’ prior knowledge about NAMs, a comprehensive poll was conducted on day 1 of the workshop and repeated at the end of the workshop. The interpretation of the results is affected by the unequal numbers of pre- and post-workshop respondents (a maximum of 36 and 26, respectively), so the pre- and post-workshop poll results cannot be compared directly.

Prior to the workshop, the participants were already familiar with some NAMs. As expected, many participants already had some experience with ‘QSARs’ and ‘read-across’ techniques. Methods such as ‘IATAs’ and ‘defined approach’ were also somewhat familiar to the participants. Most participants had heard about the use of systematic reviews, but fewer had experience using them. Some participants also had experience with Adverse Outcome Pathways (AOPs) (Appendix 2, Figure 1).

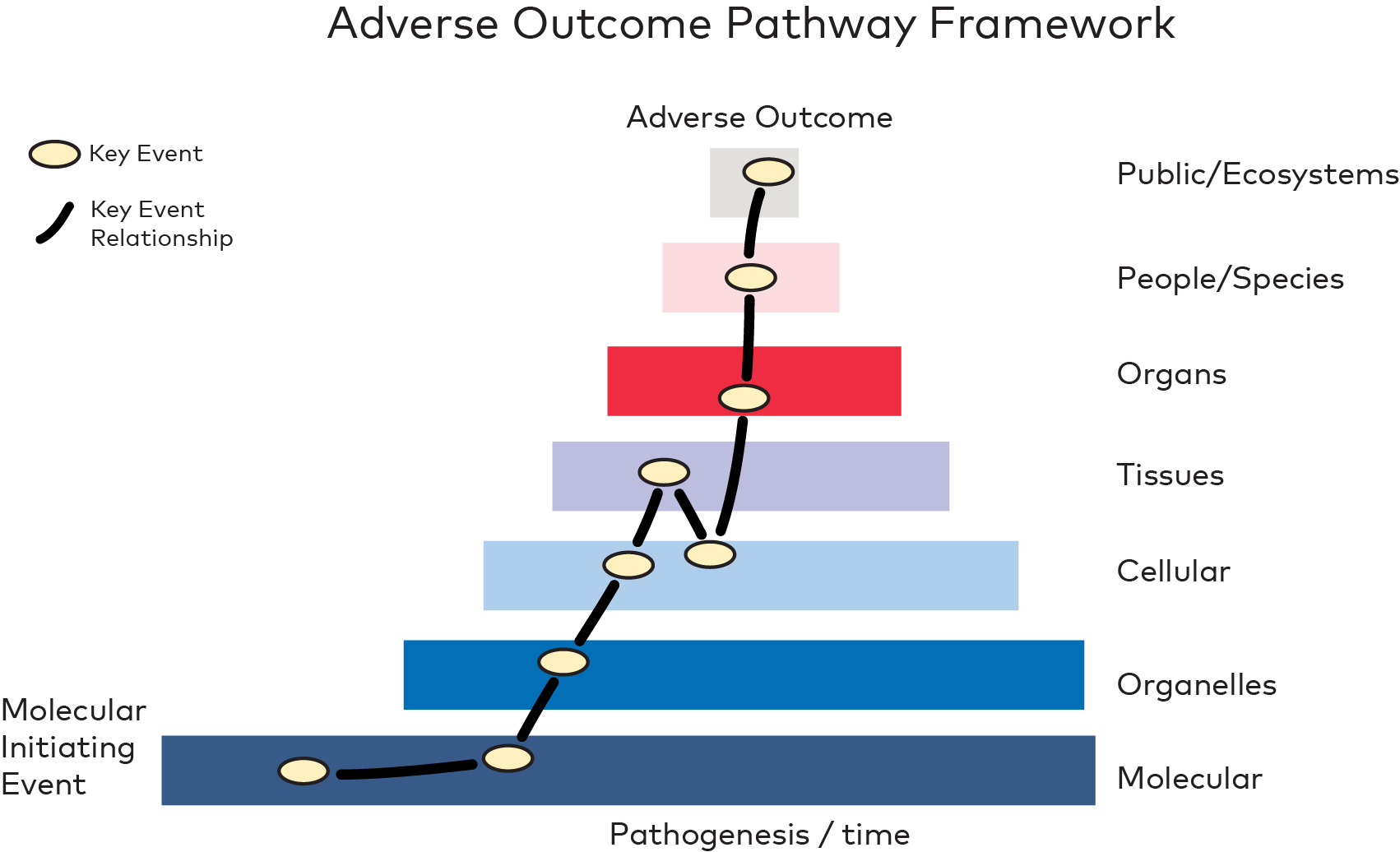

The participants recognised opportunities for NAMs in many areas of toxicology over the next 5 years (Appendix 2, Figure 2). This was especially true for ‘read-across’ and ‘grouping’ methods, which were the main focus of this workshop. ‘Sensitisation’, ‘irritation’, ‘endocrine disruptors’ and ‘genotoxicity’ were also seen as areas with large opportunities. After the workshop, the participants reported even larger opportunities in these areas (Appendix 2, Figure 5).

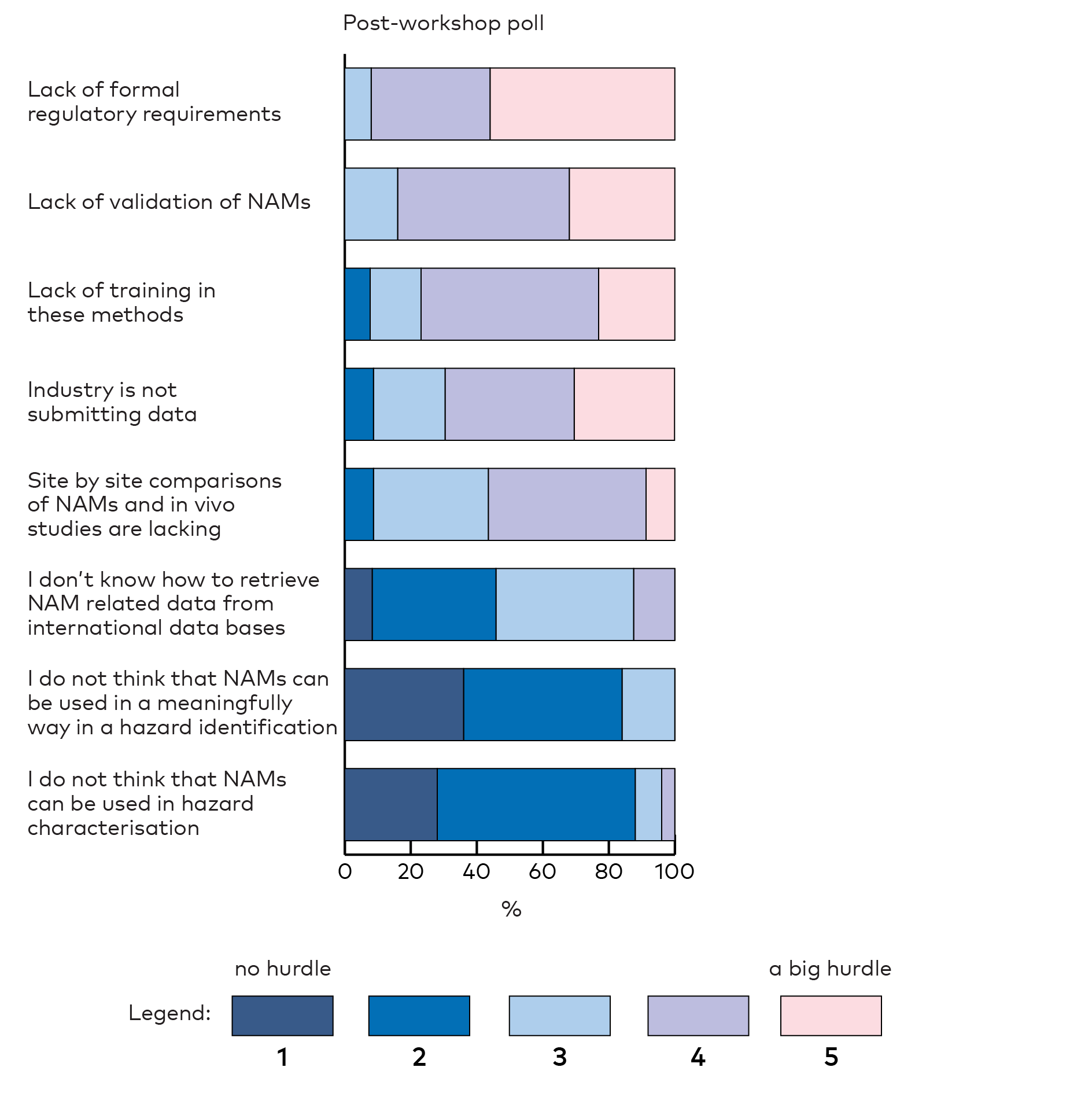

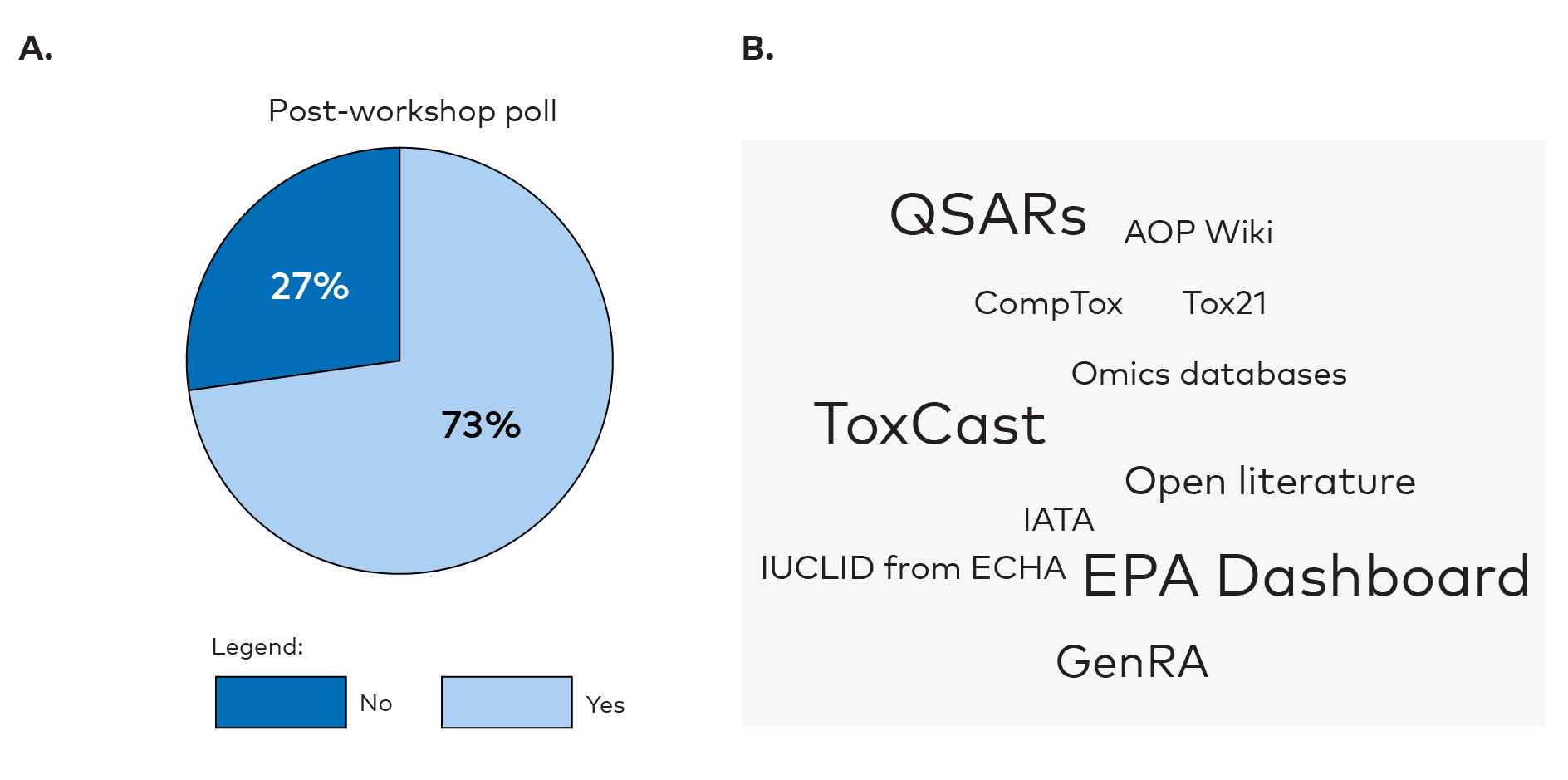

The participants were asked to identify the biggest hurdles in using NAMs for hazard assessment. Both before (Appendix 2, Figure 3) and after (Appendix 2, Figure 6) the workshop, they perceived the lack of formal regulatory requirements for NAMs and the lack of validation of NAMs as big hurdles. This was not surprising, as these hurdles were discussed during the workshop. Lack of training in NAMs was also seen as a big hurdle, suggesting a desire for more training and education. Further, the lack of industry submitting its data for reuse by others and the lack of side-by-side comparisons of NAMs and in vivo studies were indicated as hurdles. The polls also indicated that the participants had gained knowledge on how to retrieve NAM-related data from international data sources, since this was reported as less of a hurdle in the post-workshop poll. Knowledge about freely available databases was low among the participants in the pre-workshop poll (22%) (Appendix 2, Figure 4) but had increased after the workshop (73%) (Appendix 2, Figure 7). The participants were aware of various data sources such as QSARs, ToxCast, EPA Dashboard, CompTox, AOP Wiki, Tox21, Vega and GenRA. The Dashboard and GenRA stood out in particular after the workshop.

3.4. Summary of workshop

Introduction: NAM definition is still evolving

Because the field is newly emerging, the definition of NAMs is still evolving. Three complementary definitions were presented by Prof Colbourne (5.1). He pointed out that these definitions are still under development, owing both to scientific advances and to the way different regulatory bodies perceive NAMs today. The regulatory definition was more concrete and precise, probably reflecting the legal needs behind future implementations of such methods. The scientific definition was much broader, reflecting the rapid pace of discovery and innovation in this field. Regardless of the presenter, the vision remained the same: it advocates the Replacement, Reduction, and Refinement of animal testing (the 3Rs) while maintaining the ultimate goal of protecting humans and the environment from chemical hazards. Dr Escher and Prof van de Water also presented a common vision for human Next Generation Risk Assessment (NGRA), in which hazard identification and characterisation (currently done using animal tests) could be replaced by batteries of NAMs (5.13, 5.15).

NAM gains momentum

In the introductory lecture on NAMs, Prof Colbourne presented the main driving forces, in Europe and worldwide, for introducing NAMs into regulatory contexts. As he reviewed, the main legal instruments to avoid animal testing are already in place (5.1). He also highlighted the ongoing paradigm shift away from extensive animal testing. This paradigm shift was reflected later in the presentation of ongoing relevant projects in Europe (5.16). Most notably, the ASPIS cluster (Animal-free Safety assessment of chemicals: Project cluster for Implementation of novel Strategies) was created as a collaboration of the H2020-funded projects ONTOX, PrecisionTox and RISK-HUNT3R. It represents Europe’s largest effort towards ‘the sustainable, animal-free and reliable chemical risk assessment of tomorrow’. These projects contain multiple elements of NAMs and, more importantly, they connect directly with regulatory bodies (5.9, 5.15, 5.16, 5.17). NAMs were clearly on the radar of ECHA, as reviewed by Dr Sobański (5.8, 5.14), and of the US EPA, as presented by Dr Paul Friedman (5.11). However, the motivations behind this interest were slightly different. For ECHA, it was the notion that the REACH (Regulation (EC) No 1907/2006 on the Registration, Evaluation, Authorisation and Restriction of Chemicals) and CLP (Regulation (EC) No 1272/2008 on the classification, labelling and packaging of substances and mixtures) legislation was slightly disconnected from the operational level, while the EPA was more interested in using computational models to predict toxicity. Both are interested in speeding up hazard and risk assessment and the prioritisation of chemicals.

Databases and data resources

This workshop was an arena for presenting databases with existing toxicological data that could be used for NAMs. In particular, the CompTox Chemicals Dashboard (comptox.epa.gov/dashboard) and the GenRA module were introduced (5.4). The Dashboard holds data associated with more than 906,000 chemicals (as of February 2022), and Dr Paul Friedman demonstrated the practical uses of such databases in EPA research (5.11).

Use of omics in NAMs

In this workshop, omics approaches were shown to be a powerful tool for grouping and read-across, and for obtaining a mechanistic understanding of a chemical’s MoAs. Prof Viant pointed out that while targeted approaches can be effective, non-targeted approaches can reveal new, previously unknown biomarkers. He argued that either or both approaches should be used, depending on the regulatory question being asked (5.2).

Multi-omics approaches were also shown to be important in building a holistic picture of the MoAs of chemicals (5.2), and could bring a better understanding of key initiating events (5.2). Further examples using transcriptomics in human cell models were shown by Dr Escher (5.13) and Prof van de Water (5.15). The use of multi-omics was demonstrated by Prof Viant (5.9) in a case study with azo dyes, where omics-based grouping and read-across were applied.

During the workshop, ECHA presented how omics data are used in dossiers. Dr Bouhifd (5.10) noted that omics data are, in general, still very rarely submitted. This might be due to a lack of guidance or to reluctance among risk assessors and managers to use this type of information. He stated that any lack of transparency in data analysis must be removed. More importantly, detailed descriptions of the pathways that link to adverse effects need to be provided; otherwise, the promise of mechanistic omics in toxicological risk assessment will not be fulfilled.

Another aspect to be considered is the relevance of the biological system to the endpoint of interest. While omics have great potential for measuring broad biological responses to a given stressor, this response needs to be measured on a model which is known to cover the toxicological space of interest, or there is a risk of it producing misleading data. The relevance of the model to a given stressor needs to be shown before omics approaches are used.


Case studies for NAMs

Several case studies on using NAMs were presented in this workshop. Prof Viant demonstrated how omics-based grouping and read-across, applied in a case study with azo dyes, could be instrumental in predicting MoAs and could even connect these to apical endpoints (5.9). Here, multi-omics proved to be a robust basis for mechanistic grouping. Dr Escher presented case studies demonstrating how NAMs can be integrated into a human risk assessment for ‘repeated dose toxicity’ and ‘reproductive toxicity’ within a read-across assessment (5.13). As she demonstrated, the biological similarity of analogues can be calculated from the similarity of their transcriptomic (gene expression) profiles (5.13). Similar ideas were also presented by Prof van de Water (5.15).
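The idea of calculating biological similarity from gene expression patterns can be illustrated with a minimal Python sketch. It assumes that each substance is summarised as a vector of log2 fold-changes over the same set of genes, and it uses Pearson correlation as the similarity measure. The workshop did not specify which metric was used, and the substance names and values below are hypothetical.

```python
import math


def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation between two expression profiles over the same genes."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("profiles must have the same length (at least 2 genes)")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    if sd_x == 0 or sd_y == 0:
        raise ValueError("a profile with zero variance has no defined correlation")
    return cov / (sd_x * sd_y)


def rank_analogues(target: list[float],
                   sources: dict[str, list[float]]) -> list[tuple[str, float]]:
    """Rank candidate source substances by transcriptomic similarity to the target."""
    scores = [(name, pearson(target, profile)) for name, profile in sources.items()]
    # Most similar analogue first.
    return sorted(scores, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # Hypothetical log2 fold-changes for five genes (illustrative values only).
    target = [2.1, -1.3, 0.4, 1.8, -0.6]
    sources = {
        "analogue_A": [1.9, -1.1, 0.5, 1.6, -0.4],
        "analogue_B": [-0.3, 0.8, 1.2, -1.0, 0.9],
    }
    for name, r in rank_analogues(target, sources):
        print(f"{name}: r = {r:.2f}")
```

In this toy example, analogue_A, whose profile moves in the same direction as the target's, ranks first. analogue_B is negatively correlated with the target. A real read-across would also need a justified threshold and a model system relevant to the endpoint of interest.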

State-of-the-art for using NAMs in regulation

During the workshop, NAMs were thoroughly presented and discussed from a regulatory perspective, with examples of their use in a regulatory context (5.8). Dr Sobański presented ECHA’s motivation for entering the world of NAMs, its experience in applying them in a regulatory context, and its future vision for speeding up regulatory work using these approaches (5.8). The hurdles and gains from a regulatory perspective were thoroughly discussed. ECHA supports several activities via the APCRA initiative and contributes to multiple EU research programmes (e.g. EU-ToxRisk, ASPIS cluster, PARC) (5.8, 5.16).

Challenges in regulatory NAMs

The workshop clearly defined the gap between regulatory practices and state-of-the-art approaches for NAMs. Many challenges in hazard characterisation and assessment in regulatory contexts were identified during the workshop (5.8). Dr Sobański mentioned that although REACH contains provisions promoting the development of alternative methods for assessing the hazards of substances, there are also significant hurdles to overcome (5.8). In particular, the information requirements in REACH (as well as the classification criteria) refer to animal tests, and often to specific OECD in vivo test guidelines indicated in the REACH Annexes. Regulatory use of NAMs would require the development of Integrated Approaches to Testing and Assessment (IATA) and further extrapolation to human safety assessment.

Needs for training in NAMs for regulators

Throughout the workshop, regulators’ need for training was demonstrated several times, both in the prior survey and in the polls at different stages of the workshop (3.3, 3.4, 3.8, 5.5, 5.6, and 5.7).

3.5. Conclusions

- This workshop has strengthened the Nordic countries’ knowledge in the use of new methodologies in a regulatory context.

- The workshop raised awareness of the current knowledge gaps among the participants, and identified future training needs to be addressed by ECHA.

- It clearly identified the Nordic needs for capacity building and guidance to be provided by ECHA in the NAM field (especially in read-across and grouping, CLH dossiers, case studies, and omics).

- The workshop contributed towards increasing the competence among authorities on how to apply NAMs in their regulatory work.

- It emphasised that these methodologies (used to support grouping and read-across approaches in particular) have the potential to replace, reduce and complement the use of traditional animal experiments, and that training is needed now to take full advantage of this.

- Participants were shown how NAMs could be used to support grouping and read-across approaches to reduce animal use, improve hazard assessment, support the evaluation of chemical substitution and deliver hazard information for data-poor substances.

- The workshop improved the Nordic authorities’ capacity to use results from NAMs for drafting new chemical regulations (contributing to the United Nations sustainable development goals relating to good health and clean environment).

- Finally, the workshop identified the need for guidance and additional competence building in how to apply NAMs data.

3.6. Specific recommendations from the workshop

- The identification of a key event may be useful for determining a chemical’s MoAs, but it is recommended that multiple key events tied to the same AOP are identified where possible, as this would give far stronger evidence for predicting an adverse outcome.

- It is recommended that key events observed at the molecular level should be at the centre of new safety regulations. This would shift the focus away from apical outcomes in experimental animals and towards perturbations of important pathways that lead to toxicity.

- Although molecular biomarkers and targeted gene panels are already part of some current regulatory paradigms, it is recommended that regulators move away from using single molecular biomarkers and towards large panels of biomarkers to predict chemicals’ MoAs.

- The use of untargeted omics is recommended for generating new knowledge about toxicological MoAs, as omics data can be used in building mechanistic models, including AOPs.

- It is also recommended that omics data are incorporated into conventional grouping approaches to compensate for any inconsistencies in the grouping hypothesis.

- A sole reliance on chemical structure and/or physical-chemical properties is not sufficient for robustly grouping chemicals. It is therefore recommended that a variety of molecular data types, including metabolomics and transcriptomics, be used for grouping.

- It is recommended that omics be used to bridge the gap between animal models and humans by identifying similar MoAs.

- Further contributions to case studies are recommended, especially in areas where regulatory bodies meet with the scientific communities.

- A need to develop a predictive toxicology roadmap in REACH and CLP that describes a strategy to incorporate NAMs in the regulatory framework was identified.

- It is recommended that advice is provided in the guidance documents for REACH and CLP relating to omics and NAMs to ensure that they cover the topics most relevant to regulators.

- It is recommended that guidelines are provided for analysing and reporting omics data to ensure reproducibility and transparency between different labs for grouping. Any steps in the data analysis that lack transparency must be removed.

- It is recommended that workflows are established to enable molecular mechanistic data to be used for grouping approaches and prioritisation.

- The routine use of biologically based grouping alongside structure-based methods is also recommended.

- The submission of omics data by REACH registrants and assessors should be encouraged, and one way of achieving this would be by providing assessors with more guidance on this topic.

- Further research into the construction of detailed descriptions of molecular pathways that link to bioactivity and adversities is recommended.

- It is recommended that further training is provided in the use of NAMs with particular focus on groups of experts working together to encourage knowledge sharing and increase confidence.
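The recommendation to use biologically based grouping alongside structure-based methods can be sketched in Python. This is an illustrative example under stated assumptions, not a method presented at the workshop. Structural similarity is taken as the Tanimoto coefficient on sets of "on" fingerprint bits. Biological similarity is taken as the cosine similarity of omics profiles, rescaled to [0, 1]. The two are combined with an equal weight. All substance names, fingerprints, profiles, the weight and the threshold are hypothetical.

```python
import math


def tanimoto(fp_a: set[int], fp_b: set[int]) -> float:
    """Tanimoto (Jaccard) similarity between two sets of 'on' fingerprint bits."""
    union = fp_a | fp_b
    if not union:
        return 0.0
    return len(fp_a & fp_b) / len(union)


def cosine_01(x: list[float], y: list[float]) -> float:
    """Cosine similarity of two omics profiles, rescaled from [-1, 1] to [0, 1]."""
    if len(x) != len(y):
        raise ValueError("profiles must cover the same features")
    dot = sum(a * b for a, b in zip(x, y))
    norm = math.sqrt(sum(a * a for a in x)) * math.sqrt(sum(b * b for b in y))
    if norm == 0:
        return 0.0  # an all-zero profile gives no evidence of biological similarity
    return (dot / norm + 1) / 2


def group_with_target(target, candidates, weight=0.5, threshold=0.7):
    """Return candidates whose combined similarity to the target meets the threshold.

    target and each candidate value are (fingerprint_bits, omics_profile) tuples;
    weight is the share given to structural similarity (hypothetical default).
    """
    if not 0.0 <= weight <= 1.0:
        raise ValueError("weight must be between 0 and 1")
    t_fp, t_prof = target
    members = []
    for name, (fp, prof) in candidates.items():
        score = weight * tanimoto(t_fp, fp) + (1 - weight) * cosine_01(t_prof, prof)
        if score >= threshold:
            members.append(name)
    return members


if __name__ == "__main__":
    target = ({1, 4, 7, 9}, [1.5, -0.8, 0.2, 1.1])
    candidates = {
        "analogue_A": ({1, 4, 7, 12}, [1.4, -0.7, 0.3, 1.0]),
        "analogue_B": ({1, 4, 7, 9}, [-1.2, 0.9, 0.1, -1.0]),
    }
    print(group_with_target(target, candidates))  # ['analogue_A']
```

In this toy example, analogue_B's fingerprint matches the target's exactly. It is nevertheless excluded because its omics response runs opposite to the target's. This mirrors the point that chemical structure alone is not sufficient for robust grouping.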

3.7. Ongoing and follow-up NAMs activities

- From the survey and the workshop polls, it is evident that more training and hands-on examples are warranted (3.3, 3.4, 3.8, 5.5, 5.6, and 5.7).

- The omics reporting framework project is under development by the OECD and involves the development of guidance documents for consistent reporting of omics data from various sources (5.2, 5.3). The project aims to develop a framework for standardised reporting of omics data generation and analysis, to ensure that all of the information required to understand, interpret and reproduce an omics experiment and its results is transparent and available, in line with the FAIR principles.

- Ongoing OECD activities on omics: oecd.org/chemicalsafety/testing/omics.htm (5.6).

- ECHA is involved in multiple ongoing NAMs related projects aiming to translate NAMs into regulatory applications (5.8, 5.14)

- The APCRA initiative and consortium case studies, contributing to multiple EU research programmes (e.g. EU-ToxRisk, ASPIS cluster, PARC, 5.16)

- Involvement in the implementation of the EU Chemical Strategy for Sustainability (CSS), using ToxCast/Tox21 assays and QSAR predictions under the Group Management Team (GMT), and contribution to the development and adoption of Defined Approaches

- Contributions to the OECD expert groups working on the Transcriptomics and Metabolomics Reporting Framework and on the QSAR assessment framework, to revise principles and establish an assessment framework.

- Promotion of NAMs via training about NAMs for ECHA staff and committees, industry, and the scientific community.

- The EU-ToxRisk project aims to deliver read-across procedures and to establish the starting point for hazard and risk assessment strategies for chemicals with scarce data (5.15).

- Multiple projects aim to use and improve the AOP framework as a departure point for NAMs (5.16).

- Michabo Health Science Ltd invited workshop participants (i.e. regulatory bodies) to collaborate on the grouping and read-across case studies (5.17).

3.8. Workshop evaluation

At the end of the workshop an online evaluation was held to collect the views of the participants on the overall quality of the workshop, the extent to which the workshop aims were met, the extent to which the information provided in the workshop will be useful in their future work, etc.

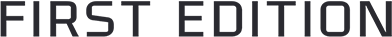

Overall, the participants were very satisfied with the workshop (average rating 4.4/5, Figure 1A), and most participants considered the workshop to be useful in their future work (Figure 1B). The majority of the participants (90%) identified a need for future training and/or guidance in the use of NAMs (Figure 1C), which included training and case studies on biostatistics, bioinformatics, and omics technologies for the grouping and read-across of substances for regulatory purposes as well as more training in omics technologies in general.

Figure 1. Results from the workshop evaluation. A) How satisfied are you overall with the workshop? B) To what extent do you expect that the outcome of the workshop will be useful in your future work? C) Do you have any future needs for training and/or guidance in the use of NAMs?

4. Biographies of the speakers

Dr Mounir Bouhifd is currently a regulatory officer at the European Chemicals Agency. He is a member of the Alternative Methods Team of the Computational Assessment Unit. His tasks include the assessment of QSAR predictions, and NAMs are another area of his work. Mounir previously worked on the development and application of alternative methods, and especially their validation, at the European Centre for the Validation of Alternative Methods (ECVAM). He was also a faculty member at Johns Hopkins University.

Prof John K. Colbourne holds the inaugural Chair of Environmental Genomics at the University of Birmingham (UK). He is also an Adjunct Professor at the Mount Desert Island Biological Laboratory (USA), Guest Professor at Hebei University (China), co-founder and CSO of Michabo Health Science Limited, and co-founder of the international Environment Care Consortium (ECC) and of the Solve Pollution Network. This year, he is incorporating the Environment Care Foundation (USA) as the registered charitable arm of the ECC. Previously, he served as genomics director of the Centre for Genomics and Bioinformatics at Indiana University (until 2012), receiving research funding from the U.S. NSF, NIH and DOE to help pioneer the application of genomics to the study of environmental health, primarily using the freshwater crustacean Daphnia, an evolutionary, ecological, and toxicological model system. This work resulted in Daphnia's designation as a biomedical model species by the U.S. National Institutes of Health and in his receiving the Royal Society Wolfson Research Merit Award. His research in Birmingham receives funding from NERC, BBSRC, the Royal Society, FSA, U.S. NIEHS, and the European Commission. It focuses on the application of genomics for environmental health protection by spearheading Precision Toxicology, with the aim of obtaining comprehensive knowledge of the effects of synthetic chemicals and environmental pollutants on biology using new and established genomic model species. He also serves on the UK Government’s Hazardous Substances Advisory Committee (HSAC), which provides expert advice on how to protect human health and the environment from potentially hazardous substances.

Dr Hubert Dirven is the leader of the Chemical Toxicology Unit at the Norwegian Institute of Public Health, and he is involved in hazard and risk assessment for REACH chemicals and for chemicals in food. He is also involved in many EU research projects, such as ONTOX, POLYRISK, EXIMIOUS and PARC. Hubert previously worked as a toxicologist in the pharmaceutical industry.

Dr Sylvia E. Escher joined the Fraunhofer Institute of Toxicology and Experimental Medicine in Hanover in September 2006, where she currently leads the in silico toxicology department in the field of human risk assessment. Her interests include the development and maintenance of toxicological databases such as RepDose, and their use to develop and improve human risk assessment methods. Examples include the development of NAM-supported read-across approaches and the TTC concept. Her team is currently developing AOPs for pulmonary fibrosis and a PBK model that addresses, in particular, the integration of in vitro ADME properties of airborne compounds. She has published more than 100 peer-reviewed articles, abstracts and book chapters.

Dr Katie Paul Friedman joined the Center for Computational Toxicology and Exposure in the Office of Research and Development at the US EPA in August 2016, where she is currently focused on application of NAMs to chemical safety assessment, with additional interests in uncertainty in alternative and traditional toxicity information, endocrine bioactivity and developmental neurotoxicity prediction, and in vitro kinetics. One of her roles in the Center is to run the ToxCast programmes. Previously, Dr Paul Friedman worked as a regulatory toxicologist at Bayer CropScience with specialties in neuro-, developmental and endocrine toxicity, and predictive toxicology. She has been actively involved in multi-stakeholder projects to develop AOPs, alternative testing approaches, and the regulatory acceptance of NAMs. Her laboratory background includes development of high-throughput screening assays, the combined use of myriad in vitro and in vivo approaches, including receptor-reporter and biochemical assays, primary hepatocyte cultures, and targeted animal testing paradigms, to investigate the human relevance of thyroid and metabolic AOPs using probe chemicals. Dr Paul Friedman received a Ph.D. in Toxicology from the University of North Carolina at Chapel Hill.

Dr Grace Patlewicz is currently a research chemist at the Center for Computational Toxicology & Exposure within the US EPA. She started her career at Unilever United Kingdom, before moving to the European Commission’s Joint Research Centre in Italy and then to DuPont in the United States. A chemist and toxicologist by training, her research interests have focused on the development and application of QSARs and read-across for regulatory purposes. She has authored ~135 journal publications and book chapters, chaired various industry groups and contributed to the development of technical guidance for QSARs, chemical categories, and AOPs under various OECD work programmes.

Dr Magda Sachana has been an Administrator within the Environment, Health and Safety Division of the OECD’s Environment Directorate since 2015. She manages the development and implementation of policies in the field of chemical safety and contributes to the OECD Test Guidelines, Pesticide and Hazard Assessment Programmes. Among other projects, Dr Sachana coordinates the OECD project on omics reporting frameworks.

Dr Tomasz Sobański is Team Leader of the Alternative Methods Team at the European Chemicals Agency. He has worked at ECHA for over 12 years, focusing on the development and application of alternative methods in regulatory processes. For many years he was the project manager of the OECD QSAR Toolbox, while in recent years he has dedicated himself to NAMs and their applications in regulatory science. Tomasz has co-authored over 30 publications and book chapters.

Mark R. Viant is Professor of Metabolomics at the University of Birmingham, UK, Executive Director of Phenome Centre Birmingham (a centre specialising in toxicometabolomics), and co-Founder/CEO of Michabo Health Science Ltd. He is also a past President of the International Metabolomics Society. His research focuses on developing and applying metabolomics in the field of human and environmental toxicology, with the goal of finding novel molecular mechanistic solutions for industry and regulators in chemical safety science. He co-led the Ecetoc MEtabolomics standaRds Initiative in Toxicology (MERIT) project, currently co-leads the omics activities within the OECD’s chemical safety programme and leads the Cefic MATCHING international ring-trial in toxicometabolomics. Mark has co-authored over 180 publications and his work was recognised by the award of a 2015 Lifetime Honorary Fellowship of the International Metabolomics Society.

Bob van de Water is Professor of Drug Safety Sciences at the Leiden Academic Centre for Drug Research at Leiden University in the Netherlands. He has worked on molecular mechanisms of toxicity for over 30 years. His toxicogenomics research led to the discovery of biomarkers that have been integrated in fluorescent reporter test systems to qualify and quantify adverse cellular stress responses in relation to genotoxicity and severe cell injury. He was the coordinator of the Horizon 2020 EU-ToxRisk project, which focused on mechanism-based testing strategies for read-across. He currently coordinates the Horizon 2020 RISK-HUNT3R project, which will focus on NGRA strategies in the context of ab initio testing. Finally, he will be a task leader in the Horizon Europe PARC programme.

Dr Antony J. Williams joined the Center for Computational Toxicology and Exposure in the Office of Research and Development at the US EPA in May 2015. His interests include the aggregation and curation of chemical data, delivery of the center’s data to the scientific community, development of models to support physicochemical property prediction and development of software approaches to support non-targeted analysis. While his PhD is in Nuclear Magnetic Resonance Spectroscopy, he moved into the field of cheminformatics and chemical information management over two decades ago. His focus has been on internet-based projects to deliver free-access community-based chemistry websites. He was one of the co-founders of ChemSpider, which he started as a hobby project and which is now hosted by the Royal Society of Chemistry. He is widely published, with >300 peer-reviewed articles, book chapters and books.

5. Summary of presentations

5.1. Introduction to New Approach Methodologies (NAMs)

By Prof John K. Colbourne

In his introductory talk, Professor John K. Colbourne presented various definitions of NAMs used by regulatory toxicologists. He showed which technologies shaped the development of NAMs, and how legislative bodies played (and still play) a role in promoting the use of NAMs towards 21st-century risk assessment. He conveyed how molecular toxicology can deliver mechanistic data, and how molecular data can predict adversity. He described approaches used in comparative biology for the cross-species extrapolation of risk assessment results, advocating tiered approaches using both model organisms and cells.

New Approach Methodologies (NAMs) in toxicology

With NAMs entering the portfolio of tools used in regulatory processes, Prof Colbourne suggested that their effective use in safety assessment will likely require a fundamental shift in our understanding of toxicology, towards a focus on MoAs. This will facilitate meaningful decisions about the hazards and risks of chemical exposure for human health and the environment.

Definitions of New Approach Methodologies (NAMs)

The definition of NAMs is still developing, and three working definitions were presented at the workshop: I) ECHA regulators define NAMs as any approach for chemical hazard and risk assessment that can significantly contribute to throughput, robustness and mechanistic knowledge, and that provides appropriate protection levels for human health and the environment. II) The UK Committee on Toxicity of Chemicals in Food, Consumer Products and the Environment (COT) defines NAMs as high-throughput screening, omics and in silico computer modelling strategies. This also includes machine learning (artificial intelligence) for the evaluation of hazard and exposure. The definition supports the approach of Replacement, Reduction and Refinement of animal testing (the 3Rs). III) The US EPA more broadly defines NAMs as any technology, methodology or approach, or combination thereof, that provides information on chemical hazard or risk assessment while avoiding the use of animal testing, for instance omics-derived NAMs. The US has also set priorities to reduce animal testing and to practically eliminate it by 2035.

The main legal instruments to avoid animal testing today

In the EU today, testing proposals and third-party consultations under REACH (Regulation (EC) No 1907/2006) still require animal testing and reporting. Industry has used five adaptations (NAMs) when registering substances in their REACH dossiers: 1) the use of existing data, 2) weight-of-evidence approaches, 3) information generated through quantitative structure-activity relationships (QSARs), 4) in vitro test methods and 5) grouping of substances and read-across. Prof Colbourne showed that grouping and read-across are the most frequently used NAMs in the registration of chemicals today.

Paradigm of moving away from animal testing

The modern paradigm of moving away from animal testing was conceived in 2007, with a ground-breaking report that laid the foundations of the modern approach to hazard assessment [1] (Gibb, S., Toxicity testing in the 21st century: a vision and a strategy. Reprod Toxicol, 2008. 25(1): p. 136–8). It was produced in the United States by the National Research Council of the National Academies to address the backlog of chemical safety testing. It proposed increasing throughput and reducing costs by using a mechanistic understanding of chemicals’ MoAs.

This approach is designed specifically to assess the risk to humans from chemical exposure, in response to increasing societal demand to reduce or eliminate the use of animals (mammalian species in particular) in chemical safety testing. It is motivated by a desire to move away from direct observation of adverse health effects (including the deaths of animals used for toxicity testing) towards new, less harmful methodologies. These new methods are now enshrined in law and will likely continue to expand, in the same way that legislation banning animal testing has done for cosmetics.

The fundamental criteria set by this report include developing a more robust scientific basis for assessing the health effects of chemicals; providing broad coverage of chemicals, chemical mixtures, outcomes and life stages; reducing the cost and time of testing; and basing decisions on human rather than rodent biology, with more focus on relevant dose levels.

Prof Colbourne questioned whether toxicity at environmentally relevant doses can truly be inferred from toxicity testing at high doses, and whether toxicity observed in a mouse is truly predictive of toxicity in humans. The proposed alternative for predicting toxicity in humans is to measure how chemical exposure perturbs normal biological functions, and to test a broad range of doses that set toxicity thresholds as points of departure (PODs) along the cellular response pathways that may cause adverse outcomes. The report recommends that regulators refocus hazard assessment on toxicity processes and MoAs, including early molecular changes, instead of on prescribed apical endpoints (such as animal deaths or reproductive outcomes).

Toxicity as a biomolecular process

Prof Colbourne stressed that by recognising toxicity as a biomolecular process, regulatory toxicology can focus on understanding the potential harmful effects of chemicals at the level of the toxicity-relevant pathway, defined here as a molecular response that, when sufficiently perturbed, is expected to result in an adverse health effect.

Proposals of the report

When this report was commissioned [2] (Gibb, S., Toxicity testing in the 21st century: a vision and a strategy. Reprod Toxicol, 2008. 25(1): p. 136–8), the National Academies had investigated different options for modern toxicity testing strategies. Prof Colbourne presented these in detail.

The report concluded that a combined, tiered approach to toxicity testing (in vitro, in vivo and in silico) may be the best option for modernising testing strategies in the near future. A combined approach using both cell culture and whole-organism testing would meet many of the criteria for new approaches discussed in the report. Mammalian systems would be used only when compounds are likely to trigger pathways with unknown MoAs or have been flagged as potentially hazardous by toxicity pathway screening.

The proposed approach is intended to diversify the applicability of toxicity testing data and to deliver a weight-of-evidence approach to risk assessment. This includes the systematic development of a tiered decision tree, the selection of data, and evidence of toxicity from a limited suite of animal tests.

Weight-of-evidence refers to an approach that 1) characterises chemicals based on their structure and function, 2) provides additional evidence of toxicity relating to hazards, and 3) provides more data for dose-response and extrapolation modelling. At each of these steps, population-based and human exposure data may be considered. In the end, the chemical regulator decides which data are needed for decision making but, at its centre, the approach rests on knowledge of a chemical’s pathway.

The report proposes that tier one focus exclusively on evaluating perturbations of pathways leading to toxicity, rather than apical endpoints, and that it be done almost entirely in vitro. It also emphasises the use of high-throughput approaches and cell lines of human origin, together with medium-throughput in vivo assays.
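The escalation rule described above (mammalian testing only when tier-one screening flags a hazard or points to an unknown MoA) can be sketched as a small decision function. This is a hypothetical illustration, not the report's decision tree: the `TierOneResult` fields, the step labels and the function name `plan_testing` are our own assumptions.

```python
from dataclasses import dataclass

@dataclass
class TierOneResult:
    """Hypothetical tier-one outcome for one chemical (field names are assumptions)."""
    chemical: str
    flagged_hazard: bool  # pathway screening flagged the chemical as potentially hazardous
    unknown_moa: bool     # the chemical perturbs a pathway with no characterised MoA

def plan_testing(result: TierOneResult) -> list:
    """Return the testing steps under the simplified rule from the text:
    escalate to mammalian testing only if a hazard is flagged or the MoA is unknown."""
    steps = ["tier one: in silico and in vitro pathway screening"]
    if result.flagged_hazard or result.unknown_moa:
        steps.append("escalation: targeted mammalian testing")
    return steps

print(plan_testing(TierOneResult("chemical A", flagged_hazard=False, unknown_moa=False)))
print(plan_testing(TierOneResult("chemical B", flagged_hazard=False, unknown_moa=True)))
```

In a real tiered scheme each branch would also weigh exposure data and medium-throughput in vivo results; the sketch only captures the gating logic.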

Paradigm shift with Tox21 programmes

This report led to a ground-breaking science programme in the United States called Tox21, which expanded on the report by recognising that chemical exposure experiments should be conducted on a broader range of animal species, at both the cellular and organismal levels. The rationale is that chemical perturbations of critical pathways are likely to be fundamental to animal biology, including that of humans. The use of biomedical model species, such as zebrafish, fruit flies or nematode worms, can improve throughput because of their small size and rapid reproduction. Furthermore, these organisms are regarded as non-sentient and are therefore not treated as protected animals in a legal context (for zebrafish, this applies to early life stages). In this way, throughput is increased, animal testing is reduced, and in vivo testing is used only to compensate for the drawbacks of in vitro research.

Transcriptomics and metabolomics

Prof Colbourne proposed that transcriptomics (quantitative measurement of gene expression) and metabolomics (quantitative measurement of metabolites) have the potential to reveal chemical MoAs. With large enough data sets, these can feed computational approaches that either directly predict the effects of a chemical on human biology or prioritise the tests that need to be done on animals.

In vitro testing is of limited use for detecting systemic toxicity. The physical behaviour of large multicellular networks and the interactions between diverse cells, tissues and organs are complex, which makes it impossible to gain a complete understanding of a chemical’s toxicity from in vitro tests alone. Some of these drawbacks can be overcome by testing on a suite of model species.


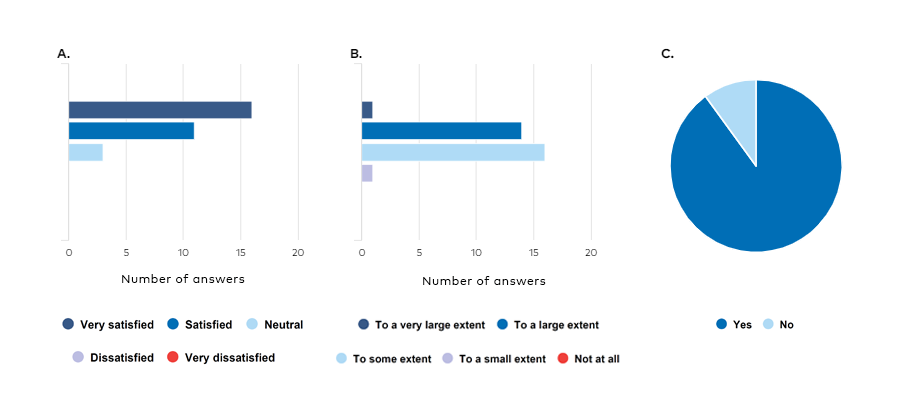

Figure 2. Adverse Outcome Pathways (AOPs) presented by John K. Colbourne, after a visualisation by Maurice Whelan (European Union Reference Laboratory for alternatives to animal testing (EURL ECVAM), Joint Research Centre (JRC) of the European Commission, Italy), modified by Marcin W. Wojewodzic.

A mechanistic understanding of a specific toxicity pathway can be gained by analysing the key events along the AOPs relevant to that chemical group (Figure 2). The utility of NAMs is expected to grow alongside the development of molecular key event databases, which connect the molecular signatures of toxicity to the associated chemical perturbations affecting all species, including humans.

The AOP is a flexible framework centred around key events. Prof Colbourne argued that NAMs based on early molecular key events in pathogenesis could be the future of risk assessment. With this approach, observing an organism's death or reproductive failure may no longer be necessary.

Key events are quantifiable, fundamental changes in the biological condition of an organism. Each key event sets up the conditions for downstream events, so key events are ultimately predictive of adverse outcomes. Furthermore, observing multiple key events associated with a given adverse outcome increases the probability that this outcome will occur.

In the chain of key events leading to an adverse outcome, the earliest point is the molecular initiating event (MIE). This is the point at which biology intersects with chemistry: the MIE is the direct interaction of a compound with a biological molecule that ultimately leads to the adverse outcome. The sequence of key events is linked across levels of biological organisation through key event relationships (KERs), which describe how one key event leads to the next.

Finally, the adverse outcome is the furthest downstream key event in the chain. It is the key event that should determine the regulatory classification of chemicals known to cause the corresponding MIE. Prof Colbourne argued that a better understanding of key event chains will improve the ability of regulatory bodies to base decisions on a chemical’s MoA.
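The AOP structure described above (an MIE, intermediate key events and an adverse outcome, connected by KERs) maps naturally onto a directed graph. The sketch below encodes a simplified, linear version of the skin sensitisation AOP mentioned in this talk; the exact event wording, the assigned levels of biological organisation and the "fraction observed" score are illustrative assumptions, and the score is a crude count of supporting evidence, not a probability.

```python
from collections import deque
from typing import Optional

# Simplified, linear skin sensitisation AOP; levels of organisation are illustrative.
KEY_EVENTS = {
    "MIE: covalent binding to skin proteins": "molecular",
    "KE: keratinocyte activation": "cellular",
    "KE: dendritic cell activation": "cellular",
    "KE: T-cell activation and proliferation": "organ",
    "AO: skin sensitisation": "organism",
}

# Key event relationships (KERs): upstream event -> downstream events
KERS = {
    "MIE: covalent binding to skin proteins": ["KE: keratinocyte activation"],
    "KE: keratinocyte activation": ["KE: dendritic cell activation"],
    "KE: dendritic cell activation": ["KE: T-cell activation and proliferation"],
    "KE: T-cell activation and proliferation": ["AO: skin sensitisation"],
}

def chain(start: str, outcome: str) -> Optional[list]:
    """Breadth-first search along KERs; returns the key event chain or None."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        if path[-1] == outcome:
            return path
        for nxt in KERS.get(path[-1], []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None

def fraction_observed(observed: set, events: list) -> float:
    """Share of key events in the chain for which there is supporting data."""
    return len(observed & set(events)) / len(events)

aop = chain("MIE: covalent binding to skin proteins", "AO: skin sensitisation")
observed = {"MIE: covalent binding to skin proteins", "KE: keratinocyte activation"}
print(fraction_observed(observed, aop))
```

Using a graph rather than a plain list keeps the sketch usable for AOP networks in which one key event feeds several downstream events.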

Prof Colbourne concluded by presenting an existing AOP for skin sensitisation that has been verified and accepted. He reflected on biological complexity at the molecular, cellular, organ and whole-organism levels.

Conclusions

NAMs are a well-timed approach to toxicology because, ever since REACH was implemented, there has been a drive to move away from intensive animal testing to determine toxicity. Significant legal changes have recently been put in place to reduce animal testing and accelerate the development of NAMs.

Identification of a key event may be useful for determining a chemical’s MoA, but the identification of multiple key events that are all tied to the same AOP gives far stronger evidence to predict an adverse outcome.

In the regulatory context, 21st-century toxicology will shift its focus away from apical outcomes in experimental animals and towards perturbations of pathways leading to toxicity. Key events (most importantly, those observed at the molecular level) and risk assessment practices based on pathway perturbations will be at the centre of these changes. This will lead to the reinterpretation and rewriting of the regulatory statutes under which risk assessments are conducted.

Questions, comments, and discussion

The audience asked how Prof Colbourne perceives the role of invertebrates in toxicology when extrapolating to human health. Prof Colbourne argued that avoiding the use of mammals and fish for toxicological testing pushes us towards invertebrates. In vitro methods can replace some in vivo testing; however, there are still good reasons to observe the systemic perturbation of biological phenomena that are broadly shared by all animals. He noted that research indicates that most pathways relevant to human health emerged very early in animal evolution, and many conserved pathways are shared with invertebrates. He therefore argued that invertebrates are viable substitutes for vertebrates in hazard assessment when it comes to identifying perturbed pathways.

5.2. Introduction to omics

By Prof Mark R. Viant

As an introduction to omics, Prof Viant focused his talk on molecular biomarkers, going from measuring single biomarkers to panels of thousands of biomarkers in a regulatory context. His presentation aimed to demystify the omics technology.

Single molecular biomarkers

The OECD plays an important role in developing test guidelines and in the mutual acceptance of data. An accepted OECD test guideline is recognised by all 38 OECD member countries. OECD Test Guideline 408 (Repeated Dose 90-Day Oral Toxicity Study in Rodents) was recently revised, placing greater emphasis on endocrine disruption. Prof Viant used endpoints in this revised guideline to introduce molecular biomarkers, referring to the endogenous thyroid hormones thyroxine (T4) and triiodothyronine (T3). Their role in a mechanistic toxicity pathway is well understood, as they respond to perturbation of the thyroid pathway. Their inclusion as required endpoints in a standardised OECD test guideline demonstrates that molecular biomarkers are already part of the current regulatory paradigm.

Panels of molecular biomarkers

Another example presented by Prof Viant was GARD®skin, an in vitro assay predicting skin sensitisation, which is under consideration in the OECD Test Guidelines Programme (project TGP 4.106). In contrast to assays built on single molecular biomarkers, this assay is based on the readout of 200 genes. The GARD test methods use a machine learning algorithm (a Support Vector Machine) to process the genomic data. Such targeted assays, which measure a molecular key event via either metabolites or genes, predict a specific MoA. The next step is to group assays together, measuring larger panels of biomarkers to predict a broader range of MoAs. In 2018, the US National Toxicology Program published a gene biomarker panel of more than 1,500 genes [1]. Another example is the MTox700+ metabolite biomarker panel, which covers 722 human-relevant metabolites associated with toxicity, adverse outcomes and disease [2].
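To illustrate how a Support Vector Machine turns a gene-panel readout into a classification, the sketch below trains a linear SVM by full-batch subgradient descent on the regularised hinge loss. It does not reproduce the GARD model, its gene list or its training data: the three genes, their log2 fold-changes and the labels are hypothetical, and a plain linear SVM written from scratch stands in for whatever implementation the assay actually uses.

```python
def train_linear_svm(X, y, lam=0.01, lr=0.1, epochs=500):
    """Linear SVM trained by full-batch subgradient descent on the
    L2-regularised hinge loss. X: feature vectors (e.g. panel gene
    expression); y: labels in {-1, +1}."""
    n, p = len(X), len(X[0])
    w, b = [0.0] * p, 0.0
    for _ in range(epochs):
        grad_w = [lam * wj for wj in w]  # gradient of (lam/2) * ||w||^2
        grad_b = 0.0
        for xi, yi in zip(X, y):
            margin = yi * (sum(wj * xj for wj, xj in zip(w, xi)) + b)
            if margin < 1:  # hinge loss active: inside the margin or misclassified
                for j in range(p):
                    grad_w[j] -= yi * xi[j] / n
                grad_b -= yi / n
        w = [wj - lr * gj for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b

def predict(w, b, x):
    """Return +1 (predicted sensitiser) or -1 (predicted non-sensitiser)."""
    return 1 if sum(wj * xj for wj, xj in zip(w, x)) + b >= 0 else -1

# Hypothetical log2 fold-changes of three panel genes per training chemical
X = [[2.0, 1.5, 0.1], [1.8, 2.2, -0.2], [2.5, 1.9, 0.0],   # labelled sensitisers
     [0.1, -0.2, 0.3], [-0.3, 0.2, -0.1], [0.0, 0.1, 0.2]]  # labelled non-sensitisers
y = [1, 1, 1, -1, -1, -1]
w, b = train_linear_svm(X, y)
print(predict(w, b, [2.1, 1.7, 0.0]), predict(w, b, [0.0, 0.0, 0.1]))
```

With a 200-gene panel the same idea applies, but feature scaling, cross-validation and an established library implementation would be needed in practice.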

Demystifying omics

Omics techniques aim to characterise and quantify biological molecules, yielding knowledge about how an organism functions. Transcriptomics, metabolomics, proteomics, genomics and epigenomics are the most common omics techniques used today. Measuring a large panel of predefined molecular biomarkers that cover a broader range of perturbations, i.e. measuring characterised MoAs, is referred to as targeted omics. However, this approach only measures what is already known and may miss chemically induced, biologically relevant effects that are not yet known. To address this, the next level is the application of untargeted omics, which explores both characterised and uncharacterised MoAs and provides a broader view of the toxicological response to a chemical. Untargeted omics involves measuring all detectable genes or metabolites, not just those associated with a particular MoA.

Information is generally viewed as flowing in one direction, from the genome through the transcriptome and proteome to the metabolome. The transcriptome refers to expressed genes (mRNA), the proteome to all proteins, and the metabolome to all metabolites. To study the effects of chemicals on biological systems, Prof Viant emphasised the power of combining expressed genes and metabolic biomarkers (a multi-omics approach) to reduce uncertainty and increase confidence in the prediction of an adverse outcome.

From mechanistic data towards regulatory application

An obvious limitation of any assay that measures a single MoA is that many individual assays are needed to cover the wide range of MoAs relevant to a hazard or risk assessment. Nevertheless, it is a good starting point. The previous examples show that molecular biomarkers and targeted gene panels are already in use, accepted or under consideration in regulatory paradigms. Untargeted omics is still at an early stage, at least in regulatory toxicology, and challenges remain, including improving the mechanistic anchoring of omics data.

Finally, Prof Viant highlighted that molecular biomarker assays are built on previous knowledge of biochemical pathways in toxicology. Gene assays like GARD®skin, however, originated from an untargeted omics study and the use of machine learning approaches to identify the 200 genes included in the assay. Thus, another role of omics will be to discover biomarkers in the first place.

Conclusions

Molecular biomarkers and targeted gene panels are already part of the current regulatory paradigm. Moving from single molecular biomarkers to panels of thousands of biomarkers enables a broader prediction of MoAs. The application of untargeted omics, exploring uncharacterised MoAs and providing an even more comprehensive view of the toxicological response to a chemical, is promising but still at an early stage in regulatory toxicology.

Footnotes

- ^ Mav, D., et al., A hybrid gene selection approach to create the S1500+ targeted gene sets for use in high-throughput transcriptomics. PLoS One, 2018. 13(2): p. e0191105.

- ^ Sostare, E., et al., Knowledge-driven approaches to create the MTox700+ metabolite panel for predicting toxicity. Toxicol Sci, 2022.

5.3. Towards regulatory applications of molecular mechanistic data

By Prof Mark Viant

The focus of Prof Viant’s talk was on grouping and read-across using molecular mechanistic data. He also gave a brief introduction to the determination of potency via benchmark dosing.

Concept for grouping using molecular mechanistic data

It has been pointed out that relying solely on chemical structure is not enough for grouping chemicals. Prof Viant started his presentation by introducing the concept of grouping chemicals using molecular mechanistic data. Firstly, the transcriptome is condition-dependent: chemicals acting via different MoAs induce different sets of gene biomarkers. This implies that chemicals acting via the same MoA should induce similar gene biomarkers. The same concept applies to metabolomes, which are also condition-dependent: chemicals acting via different MoAs induce different metabolic biomarkers, suggesting that chemicals acting via the same MoA should induce similar metabolic biomarkers. The idea of using molecular mechanistic data in grouping is not new, however. The term bioprofiling, which includes transcriptomics and metabolomics, is already used by the OECD (and others) in existing guidance documents for grouping and read-across [1].

Deficiencies

The ECHA report on alternatives to testing on animals for the REACH Regulation [2] addresses shortcomings in grouping and read-across. The most common shortcomings include missing or low-quality supporting data, and limitations in the hypothesis and justification of the toxicological prediction. The report specifically mentions that to increase robustness and regulatory acceptance for human health endpoints, additional data are needed, particularly on toxicological mechanisms and ADME properties. Furthermore, it suggests that NAMs (for example, high-throughput in vitro screening) have the potential to further substantiate the hypotheses behind read-across approaches. Prof Viant underlined the word ‘potential’ to remind everyone that several issues remain to be resolved.

How to bring molecular mechanistic data into grouping and read across?

According to Prof Viant, bringing molecular mechanistic data into the procedure does not involve a fundamental change in the grouping and read-across paradigm. New data in the form of molecular biomarkers would be added to the multi-step grouping and read-across process to build confidence in the formation of the group or category, while the rest of the paradigm stays unchanged. For example, the process could involve measuring gene expression and metabolites to characterise the biological response to chemical exposure, calculating the similarity of the gene expression and metabolite profiles between each pair of chemicals, and visualising these similarities to form groups of chemicals. Several papers have been published on this approach in recent years [3] [4].
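The pairwise-similarity step described above can be sketched as follows. This is a minimal illustration, not the method of the cited papers: we assume each chemical is summarised by a profile of log2 fold-changes over the same biomarkers, use Pearson correlation as the similarity measure, and form groups by single linkage above a hypothetical threshold of 0.8. The chemical names and values are invented for illustration.

```python
from itertools import combinations
from math import sqrt

def pearson(a, b):
    """Pearson correlation between two equal-length response profiles."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    sa = sqrt(sum((x - ma) ** 2 for x in a))
    sb = sqrt(sum((y - mb) ** 2 for y in b))
    return cov / (sa * sb)

def group_chemicals(profiles, threshold=0.8):
    """Single-linkage grouping: chemicals whose profiles correlate >= threshold,
    directly or through a chain of such pairs, end up in the same group."""
    parent = {c: c for c in profiles}

    def find(c):
        while parent[c] != c:
            parent[c] = parent[parent[c]]  # path halving keeps the trees shallow
            c = parent[c]
        return c

    similarity = {}
    for a, b in combinations(profiles, 2):
        r = pearson(profiles[a], profiles[b])
        similarity[(a, b)] = r
        if r >= threshold:
            parent[find(a)] = find(b)  # merge the two groups

    groups = {}
    for c in profiles:
        groups.setdefault(find(c), []).append(c)
    return sorted(sorted(g) for g in groups.values()), similarity

# Hypothetical log2 fold-changes of five biomarkers (genes or metabolites)
profiles = {
    "chem_A": [2.0, 1.5, -1.0, 0.2, 0.0],
    "chem_B": [1.8, 1.7, -0.8, 0.1, 0.1],
    "chem_C": [-0.5, 0.1, 0.3, 2.2, 1.9],
    "chem_D": [-0.4, 0.0, 0.5, 2.0, 2.1],
}
groups, similarity = group_chemicals(profiles)
print(groups)
```

The `similarity` dictionary holds every pairwise score, which is what a heatmap or dendrogram would visualise; the threshold, the correlation measure and the linkage rule are all design choices that a real case study would need to justify.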

Determination of potency via benchmark dosing

Benchmark dose (BMD) modelling is used to estimate a point of departure from which a safe dose can be derived. Increasingly, this approach has been applied to molecular data, looking at the level of a particular molecular response as a function of dose. Prof Viant gave an example using a molecular biomarker associated with energy metabolism to illustrate how potency can be estimated.
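The core idea of BMD estimation can be shown with a deliberately simplified sketch: fit a dose-response model, define a benchmark response (BMR), and solve for the dose that produces it. Here we assume a linear model fitted by least squares and a BMR of a 10% change relative to the fitted background; the doses and biomarker levels are hypothetical, noise-free values chosen so the arithmetic is easy to follow. Regulatory BMD analyses instead fit several models (with dedicated software such as US EPA BMDS or RIVM PROAST) and report a lower confidence bound (BMDL), which this sketch does not compute.

```python
def fit_linear(doses, responses):
    """Ordinary least-squares fit of response = a + b * dose."""
    n = len(doses)
    md = sum(doses) / n
    mr = sum(responses) / n
    sxx = sum((d - md) ** 2 for d in doses)
    sxy = sum((d - md) * (r - mr) for d, r in zip(doses, responses))
    b = sxy / sxx
    a = mr - b * md
    return a, b

def benchmark_dose(doses, responses, bmr=0.10):
    """Dose at which the fitted response differs from the fitted background (a)
    by the relative amount bmr (default 10%), under the linear model."""
    a, b = fit_linear(doses, responses)
    if b == 0:
        raise ValueError("no dose-response trend: BMD undefined")
    return bmr * abs(a) / abs(b)

# Hypothetical relative levels of an energy-metabolism biomarker (control = 100)
doses = [0, 1, 3, 10, 30]
responses = [100, 97, 91, 70, 10]
print(round(benchmark_dose(doses, responses), 3))
```

With these values the fitted background is 100 and the slope is -3 per dose unit, so a 10% decrease (10 units) is reached at a dose of about 3.33.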

Conclusions

There is wide acceptance that relying solely on chemical structure and/or physicochemical properties is insufficient for robustly grouping chemicals. Different types of molecular data, including metabolomics and transcriptomics, can provide confidence in grouping and, in principle, enable more reliable read-across. Metabolomics data have previously been submitted to ECHA to support read-across and data gap filling for 3-aminopropan-1-ol.

Footnotes

- ^ OECD, Guidance on Grouping of Chemicals, Second Edition. 2017.

- ^ ECHA, The use of alternatives to testing on animals for the REACH Regulation. Fourth report (2020) under Article 117(3) of the REACH Regulation. 2020.

- ^ Nakagawa, S., et al., Grouping of chemicals based on the potential mechanisms of hepatotoxicity of naphthalene and structurally similar chemicals using in vitro testing for read-across and its validation. Regulatory Toxicology and Pharmacology, 2021.

- ^ Sperber, S., et al., Metabolomics as read-across tool: An example with 3-aminopropanol and 2-aminoethanol. Regulatory Toxicology and Pharmacology, 2019.

Towards regulatory application of molecular mechanistic data – part II

By Prof John K. Colbourne

Prof Colbourne gave a talk about detecting chemicals’ MoAs and extrapolating them across species by embracing genetic similarities and differences in an evolutionary context.

Genetic similarities and differences across species

Most of our traits are shared with other animal species, inherited from common ancestors over a long history of animal evolution. An important question is to what extent we can rely on genomic observations made in invertebrates to predict the health effects of chemical hazards in humans. If genes encode the elements that are disrupted by chemicals and thereby lead to toxicity, toxicology can base the cross-species extrapolation of toxicity pathways on knowledge of the evolutionary conservation of genes and their functions (comparative biology of animal genomes). Genes most strongly linked to adverse outcomes are disproportionately shared among all animals, including humans. Differences among species can be either qualitative or quantitative. Qualitative differences are attributed to gains or losses of traits, including pathways relevant to toxicity, while quantitative differences can be attributed to genetic variation, either segregating within or among populations of the same species, or non-segregating variation among species.

Chemical modes of action (MoAs) and extrapolation across species

Prof Colbourne presented three examples, based on existing data, of how chemical MoAs are detected and extrapolated across species by embracing the genetic similarities and differences in our evolutionary history.

The first example demonstrated qualitative differences, in terms of metabolic pathways that diverge among species. The benzoylurea class of insecticides was designed by industry to inhibit chitin synthesis. Insects have a chitin exoskeleton whereas vertebrates do not, and this rationale was used to design this class of insecticides. The toxicity of these insecticides should therefore depend on whether an animal species has a chitin synthesis pathway in its genome. However, a systematic review of sequenced animal genomes, including those of humans, other vertebrates and invertebrates, revealed that the chitin synthase gene and pathway originated in an ancestor of both vertebrates and invertebrates, at the base of the animal phylogeny. By evolutionary chance, the chitin synthase gene was later lost in the lineage leading to humans and other mammals. Prof Colbourne described the fact that these insecticides are not predicted to be toxic to humans as an evolutionary “flip of the coin”.

In the second example, Prof Colbourne demonstrated quantitative differences in terms of rate variation in metabolic pathways. The metabolism of certain compounds depends on the presence or absence of the pathway itself, or on the varying efficiency of the enzymes encoded by variants found among animal genomes, including the human genome.

The third example concerned how genetic variation can influence toxicity. Genetic variation within and among animal populations (including humans) controls processes that affect exposure, dose, toxicokinetics and toxicodynamics. For example, a single amino acid substitution may radically alter the efficiency of an enzyme and hence influence toxicity. In a case study, it was demonstrated how genetic variation in human hepatocytes from eight individuals affected the capacity to metabolise and detoxify inorganic arsenic.
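To make the idea of quantitative differences in enzyme efficiency concrete, the sketch below applies the standard Michaelis–Menten rate equation to two enzyme variants. The variant names and kinetic parameters are purely hypothetical and were not part of the presentation; the only point illustrated is that a substitution which raises Km (lower substrate affinity) reduces the metabolic rate at the same substrate concentration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EnzymeVariant:
    """Hypothetical kinetic parameters for one variant of a metabolising enzyme."""
    name: str
    vmax: float  # maximum reaction rate (arbitrary units)
    km: float    # substrate concentration at which the rate equals vmax / 2


def michaelis_menten_rate(variant: EnzymeVariant, substrate: float) -> float:
    """Reaction rate v = Vmax * S / (Km + S)."""
    if substrate < 0:
        raise ValueError("substrate concentration must be non-negative")
    return variant.vmax * substrate / (variant.km + substrate)


def low_substrate_slope(variant: EnzymeVariant) -> float:
    """Vmax / Km: the approximate rate per unit substrate when S is much smaller than Km."""
    return variant.vmax / variant.km


# Hypothetical example: same Vmax, but the substituted variant binds substrate less well.
reference = EnzymeVariant("reference", vmax=10.0, km=2.0)
substituted = EnzymeVariant("substituted", vmax=10.0, km=8.0)

for v in (reference, substituted):
    print(v.name, michaelis_menten_rate(v, 2.0), low_substrate_slope(v))
# prints:
# reference 5.0 5.0
# substituted 2.0 1.25
```

At the same substrate level the hypothetical substituted variant clears the compound at less than half the rate, which is the kind of quantitative difference that apical endpoints in a single test species would not reveal.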

Based on these examples, Prof Colbourne emphasised that safety testing on animals based exclusively on observed apical endpoints can be either protective of human health or misleading, depending on the test animal and its genetic basis for toxicity. NAMs may help by providing a more nuanced understanding of the risks that chemicals pose to humans.

Finally, Prof Colbourne talked about the prediction of liver toxicity and MoAs using metabolomics in HepG2 cells in vitro [1]. Here, compounds were grouped according to their presumed molecular MoA.
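Grouping compounds by the similarity of their metabolite profiles can be sketched as follows. This is an illustration under stated assumptions, not the method of Ramirez et al.: each compound is represented by a hypothetical vector of log2 fold changes over the same ordered list of metabolites, similarity is the Pearson correlation, and compounds are linked into one group whenever a chain of pairwise correlations meets an arbitrary threshold (single linkage).

```python
from itertools import combinations
from math import sqrt


def pearson(x, y):
    """Pearson correlation between two equal-length profiles."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("profiles must have the same length (>= 2)")
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0 or sy == 0:
        return 0.0  # a flat profile carries no pattern to correlate
    return cov / (sx * sy)


def group_by_profile(profiles, threshold=0.8):
    """Single-linkage grouping via union-find: compounds share a group if they are
    connected by a chain of pairwise correlations >= threshold."""
    parent = {name: name for name in profiles}

    def find(n):
        while parent[n] != n:
            parent[n] = parent[parent[n]]  # path halving
            n = parent[n]
        return n

    for a, b in combinations(profiles, 2):
        if pearson(profiles[a], profiles[b]) >= threshold:
            parent[find(a)] = find(b)

    groups = {}
    for name in profiles:
        groups.setdefault(find(name), []).append(name)
    return sorted(sorted(g) for g in groups.values())


# Hypothetical log2 fold changes for five metabolites (illustrative numbers only).
profiles = {
    "compound_A": [1.2, -0.8, 0.5, 2.0, -1.1],
    "compound_B": [1.0, -0.7, 0.6, 1.8, -0.9],
    "compound_C": [-0.5, 1.5, -1.2, 0.1, 0.9],
    "compound_D": [-0.4, 1.3, -1.0, 0.2, 1.1],
}
print(group_by_profile(profiles))
# [['compound_A', 'compound_B'], ['compound_C', 'compound_D']]
```

In practice, the choice of similarity measure and threshold strongly affects the groups, which is one reason mechanistic anchoring of the resulting groups matters.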

Conclusions

In conclusion, Prof Colbourne argued that omics can redirect testing to achieve the following:

- Improve certainty in selecting critical endpoints through building and supporting mechanistic information

- Enhance understanding of whether adverse effects observed in animals are likely to occur in humans via similar MoAs/molecular key events

- Guide the selection of appropriate risk assessment approaches (such as threshold/quantitative or non-threshold/qualitative approaches)

- Support chemical grouping and read-across

Footnotes

- ^ Ramirez, T., et al., Prediction of liver toxicity and mode of action using metabolomics in vitro in HepG2 cells. Arch Toxicol, 2018. 92(2): p. 893–906.

Guidance document for consistent reporting of omics data from various sources

By Prof Mark Viant

The omics reporting framework project is being conducted by the OECD and involves the development of guidance documents for consistent reporting of omics data from various sources. The goal is to develop a framework for standardised reporting of omics data generation and analysis, to ensure that all of the information required to understand, interpret and reproduce an omics experiment and its results is available. Prof Viant emphasised that the purpose is to ensure that sufficient information is available to enable an evaluation of the quality of the experimental data and their interpretation, and to support reproducibility, but not to stipulate the methods of data analysis or interpretation.
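Because the framework specifies what must be reported rather than how data must be analysed, a natural automated aid is a completeness check. The sketch below assumes a report stored as nested dictionaries; the section and field names are hypothetical placeholders and are not the actual OECD TRF/MRF template fields.

```python
# Hypothetical required fields; the real OECD templates define their own, much longer lists.
REQUIRED_FIELDS = {
    "study_design": ["test_substance", "dose_levels", "exposure_duration"],
    "data_acquisition": ["platform", "instrument"],
    "data_processing": ["normalisation_method", "software_and_version"],
}


def missing_fields(report: dict) -> dict:
    """Return, per section, the required fields that are absent or empty.
    Only presence is checked; the content itself is not judged."""
    missing = {}
    for section, fields in REQUIRED_FIELDS.items():
        entries = report.get(section, {})
        absent = [f for f in fields if entries.get(f) in (None, "", [])]
        if absent:
            missing[section] = absent
    return missing


report = {
    "study_design": {"test_substance": "compound X", "dose_levels": [0, 10, 100],
                     "exposure_duration": "24 h"},
    "data_acquisition": {"platform": "LC-MS"},
    "data_processing": {"normalisation_method": "", "software_and_version": "tool 1.0"},
}
print(missing_fields(report))
# {'data_acquisition': ['instrument'], 'data_processing': ['normalisation_method']}
```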

The work integrates two omics approaches: the transcriptomics reporting framework (TRF), led by Joshua Harrill (USEPA) and Carole Yauk (formerly of Health Canada, now at the University of Ottawa), and the metabolomics reporting framework (MRF), led by Prof Viant (University of Birmingham, UK). The TRF and MRF are harmonised, and each provides a reporting template and narrative guidance. In this connection, Prof Viant highlighted the paper by Harrill et al. [1] on the progress towards an OECD reporting framework for transcriptomics and metabolomics in regulatory toxicology.

To improve the guidance document, extensive trialling has been conducted via case studies with ‘data submitter’ and ‘end user’ teams, and the two sets of results were compared in a concordance analysis. Moreover, trials are ongoing in a Cefic-funded project to demonstrate that multiple laboratories, each analysing and reporting omics data from a single toxicity study, can arrive at the same conclusion for grouping eight chemicals (cefic-lri.org/projects/c8-assessing-the-repeatability-of-metabolomics-within-a-regulatory-context-through-a-multi-laboratory-ring-trial/). This multi-laboratory ring trial will also use the new MRF.
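The metrics used in the concordance analysis were not described in the presentation. As one plausible example, the sketch below compares the sets of significant features reported by a submitter team and an end-user team using the Jaccard index; the metric choice and the feature identifiers are assumptions for illustration.

```python
def jaccard(a: set, b: set) -> float:
    """Jaccard index |A ∩ B| / |A ∪ B|; defined here as 1.0 when both sets are empty."""
    union = a | b
    if not union:
        return 1.0
    return len(a & b) / len(union)


def concordance_report(submitter: set, end_user: set) -> dict:
    """Summarise agreement between two teams' lists of significant features."""
    return {
        "shared": sorted(submitter & end_user),
        "only_submitter": sorted(submitter - end_user),
        "only_end_user": sorted(end_user - submitter),
        "jaccard": jaccard(submitter, end_user),
    }


# Hypothetical feature identifiers.
submitter = {"gene1", "gene2", "gene3", "gene4"}
end_user = {"gene2", "gene3", "gene4", "gene5"}
print(concordance_report(submitter, end_user))
# {'shared': ['gene2', 'gene3', 'gene4'], 'only_submitter': ['gene1'],
#  'only_end_user': ['gene5'], 'jaccard': 0.6}
```

Listing the non-shared features alongside the summary score helps trace a disagreement back to a specific reporting or processing step.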

Footnotes

- ^ Harrill, J.A., et al., Progress towards an OECD reporting framework for transcriptomics and metabolomics in regulatory toxicology. Regul Toxicol Pharmacol, 2021. 125: p. 105020.

5.4. An introduction to EPA CompTox Dashboard and GenRA Read-Across module

By Dr Antony J. Williams and Dr Grace Patlewicz

Vision of using CompTox for NAMs in computational toxicology

The vision for computational toxicology research methods (NAMs) is to speed up prioritisation and to decrease the time and cost of chemical hazard evaluation. Dr Williams and Dr Patlewicz introduced the publicly accessible CompTox Chemicals Dashboard (https://comptox.epa.gov/dashboard), followed by hands-on demonstrations of using this resource for different applications. The Dashboard provides public access to many of the databases developed by the Center for Computational Toxicology and Exposure at the US Environmental Protection Agency as part of its Chemical Safety for Sustainability Research Program. It contains data generated and assembled over the last 20 years, starting with the DSSTox database [1], with the overarching goal of delivering a computational toxicology platform where innovative alternative ways of evaluating chemicals for health risks can flourish.

Overview of the CompTox

The data in the Dashboard are associated with more than 906,000 chemicals (as of February 2022). Considerable effort is invested in manual curation of the data, as exemplified by the investment in curating and expanding the DSSTox chemistry data [2]. In addition, multiple other databases are integrated into the Dashboard, including, but not limited to, the invitrodb database associated with ToxCast (epa.gov/chemical-research/exploring-toxcast-data-downloadable-data) and data associated with exposure predictions (epa.gov/chemical-research/rapid-chemical-exposure-and-dose-research). Through a rich interface, the Dashboard presents information on individual ToxCast/Tox21 assays down to the level of individual data points, including the procedures used to fit models to the data, and the modelling is updated as the science progresses. Examples of models derived from these data include those from the CERAPP [3] and CoMPARA [4] projects. For more details about the ToxCast/Tox21 bioactivity data, see the lecture by K.P. Friedman and “ToxCast Chemical Landscape: Paving the Road to 21st Century Toxicology” [5].
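Concentration–response fitting of the kind shown for ToxCast/Tox21 assays can be illustrated with a three-parameter Hill model, one of the curve forms used in ToxCast data processing. The sketch below is a deliberately crude stand-in, not the Dashboard's pipeline: top and slope are held fixed, and the AC50 is chosen from a hypothetical grid by minimising the sum of squared residuals on noise-free illustrative data.

```python
def hill_response(conc: float, top: float, ac50: float, n: float) -> float:
    """Hill model: response rises from 0 towards `top`, reaching top / 2 at
    conc == ac50; `n` controls the steepness of the curve."""
    if conc <= 0:
        return 0.0
    return top / (1.0 + (ac50 / conc) ** n)


def fit_ac50(concs, responses, top, n, grid):
    """Pick the AC50 from `grid` that minimises the sum of squared residuals,
    holding top and n fixed (real pipelines optimise all parameters)."""
    def sse(ac50):
        return sum((r - hill_response(c, top, ac50, n)) ** 2
                   for c, r in zip(concs, responses))
    return min(grid, key=sse)


# Illustrative, noise-free data generated with AC50 = 3.0.
concs = [0.1, 0.3, 1.0, 3.0, 10.0, 30.0, 100.0]
responses = [hill_response(c, top=100.0, ac50=3.0, n=1.0) for c in concs]
print(fit_ac50(concs, responses, top=100.0, n=1.0, grid=[0.3, 1.0, 3.0, 10.0, 30.0]))
# 3.0
```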

Chemical substances added to the underlying DSSTox database are identified based on programmes of interest to the agency and are harvested from regulatory documentation, public domain databases, literature articles and other resources. The manual curation process, which ensures high-quality mappings between substance identifiers and associated chemical structures, has been described in detail [6].

Introduced in April 2016, the Dashboard [7] was developed to provide an intuitive, searchable platform offering detailed information about a chemical of interest, its properties and structure, while also linking to the source information for given parameters. Since its initial release, multiple layers of functionality have been added with each incremental release. For example, the Executive Summary gives an overview of toxicity-related information such as quantitative values, physicochemical properties, links to known AOPs, and in vitro bioactivity summary plots (see Bisphenol A as an example: comptox.epa.gov/dashboard/chemical/executive-summary/DTXSID7020182).

There are hundreds of thousands of experimental data points for human and ecological toxicity (the ToxVal database), physicochemical properties, and fate and transport. Where such experimental data are not available, QSAR prediction models have been used to generate predicted data. These include Toxicity Estimation Software Tool (TEST) predictions (epa.gov/chemical-research/toxicity-estimation-software-tool-test), the OPEn structure-activity/property Relationship App (OPERA) [8] [9], as well as commercial models. These prediction models are updated over time with additional data, and new predictions are added in future releases.
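The experimental-first principle described above can be expressed as a simple selection rule that a user might apply to exported property values. This is a hypothetical sketch: the record layout, source labels and method names are assumptions, and the Dashboard itself displays experimental and predicted values side by side rather than applying this rule.

```python
from dataclasses import dataclass
from statistics import median
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class PropertyValue:
    value: float
    source: str  # "experimental" or "predicted" (assumed labels)
    method: str  # database or model name (placeholder labels below)


def select_value(values: List[PropertyValue]) -> Optional[Tuple[float, str]]:
    """Return (median, source), preferring experimental values and falling back
    to predicted values only when no experimental value exists."""
    for source in ("experimental", "predicted"):
        subset = [v.value for v in values if v.source == source]
        if subset:
            return median(subset), source
    return None  # no data of either kind


only_predicted = [PropertyValue(2.0, "predicted", "model_1"),
                  PropertyValue(3.0, "predicted", "model_2")]
mixed = only_predicted + [PropertyValue(3.1, "experimental", "database_1")]
print(select_value(only_predicted))  # (2.5, 'predicted')
print(select_value(mixed))           # (3.1, 'experimental')
```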

The Dashboard also provides access to multiple forms of exposure data, including the Chemical and Products Database (CPDat) [10], modelled exposure data based on the Systematic Empirical Evaluation of Models (SEEM) approach [11] and predicted functional use [12]. To support exposure monitoring using mass spectrometry (MS) approaches (including non-targeted analysis), the chemical structures have been processed into a form known as “MS-Ready” [13], which has been used as the basis of many MS studies in recent years [14] [15].

The Abstract Sifter literature search module [16] has also been integrated into the Dashboard, and search strategies using other internet sources have been implemented. These take into account the complexity arising from the many potential synonyms and identifiers associated with chemicals, and allow queries to be fine-tuned based on real-time retrieval of data from PubMed (based on CAS numbers and other identifiers).
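The synonym problem can be illustrated by assembling a PubMed query from a list of chemical names and identifiers. This sketch is not how Abstract Sifter works internally; it simply de-duplicates names case-insensitively and ORs them together as quoted title/abstract searches using PubMed's [tiab] field tag. The synonym list is an illustrative assumption.

```python
def build_pubmed_query(names):
    """OR together de-duplicated chemical names/identifiers as quoted
    title/abstract searches ([tiab])."""
    seen = set()
    terms = []
    for name in names:
        cleaned = name.replace('"', "").strip()  # stray quotes would break the phrase
        key = cleaned.lower()
        if cleaned and key not in seen:
            seen.add(key)
            terms.append(f'"{cleaned}"[tiab]')
    return " OR ".join(terms)


print(build_pubmed_query(["Bisphenol A", "BPA", "80-05-7", "bisphenol a"]))
# "Bisphenol A"[tiab] OR "BPA"[tiab] OR "80-05-7"[tiab]
```

Short abbreviations such as "BPA" can retrieve unrelated records, which is exactly where fine-tuning a query against the retrieved results becomes useful.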

Many flexible searches are possible through the Dashboard, including searches for chemicals (by names and identifiers such as CAS RN), products and use categories, and assays and genes associated with ToxCast/Tox21.
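Because CAS RNs are a common search key, it can be worth validating them before searching, since a mistyped number may silently match nothing or the wrong substance. A CAS RN has the form of 2 to 7 digits, 2 digits and a single check digit; the check digit is the sum of the preceding digits, each multiplied by its position counted from the right (1, 2, 3, ...), modulo 10. The function name below is illustrative.

```python
import re

CAS_PATTERN = re.compile(r"^(\d{2,7})-(\d{2})-(\d)$")


def is_valid_cas(cas: str) -> bool:
    """Check the format and the check digit of a CAS Registry Number."""
    match = CAS_PATTERN.match(cas.strip())
    if not match:
        return False
    body = match.group(1) + match.group(2)
    check = int(match.group(3))
    # Weight digits 1, 2, 3, ... starting from the rightmost digit of the body.
    total = sum(int(d) * w for w, d in enumerate(reversed(body), start=1))
    return total % 10 == check


print(is_valid_cas("80-05-7"))   # True  (Bisphenol A)
print(is_valid_cas("80-05-8"))   # False (wrong check digit)
```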

Batch searching [17] is also supported, allowing users to query data for up to ten thousand chemicals at a time (in the present version) and to download the data in standard formats, including CSV and Excel.
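When a chemical list exceeds the batch limit, it has to be split before searching. The sketch below de-duplicates identifiers and yields chunks of at most 10,000, matching the limit stated above; the limit may change between Dashboard versions, and the placeholder identifiers are hypothetical.

```python
from typing import Iterable, Iterator, List

MAX_BATCH = 10_000  # batch-search limit described above; check the current Dashboard version


def batches(identifiers: Iterable[str], size: int = MAX_BATCH) -> Iterator[List[str]]:
    """Yield de-duplicated, non-empty identifiers in consecutive chunks of at most `size`."""
    if size < 1:
        raise ValueError("size must be >= 1")
    seen = set()
    chunk: List[str] = []
    for ident in identifiers:
        ident = ident.strip()
        if not ident or ident in seen:
            continue
        seen.add(ident)
        chunk.append(ident)
        if len(chunk) == size:
            yield chunk
            chunk = []
    if chunk:
        yield chunk


ids = [f"chem_{i}" for i in range(25_000)]  # placeholder identifiers
print([len(b) for b in batches(ids)])  # [10000, 10000, 5000]
```

Each chunk can then be submitted as a separate batch search and the downloaded CSV files concatenated afterwards.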

A practical example of how the Dashboard can support risk assessment was recently published: “Sourcing data on chemical properties and hazard data from the US-EPA CompTox Chemicals Dashboard: A practical guide for human risk assessment” [18].

Footnotes

- ^ Richard, A.M. and C.R. Williams, Distributed structure-searchable toxicity (DSSTox) public database network: a proposal. Mutat Res, 2002. 499(1): p. 27–52.

- ^ Grulke, C.M., et al., EPA's DSSTox database: History of development of a curated chemistry resource supporting computational toxicology research. Comput Toxicol, 2019. 12.